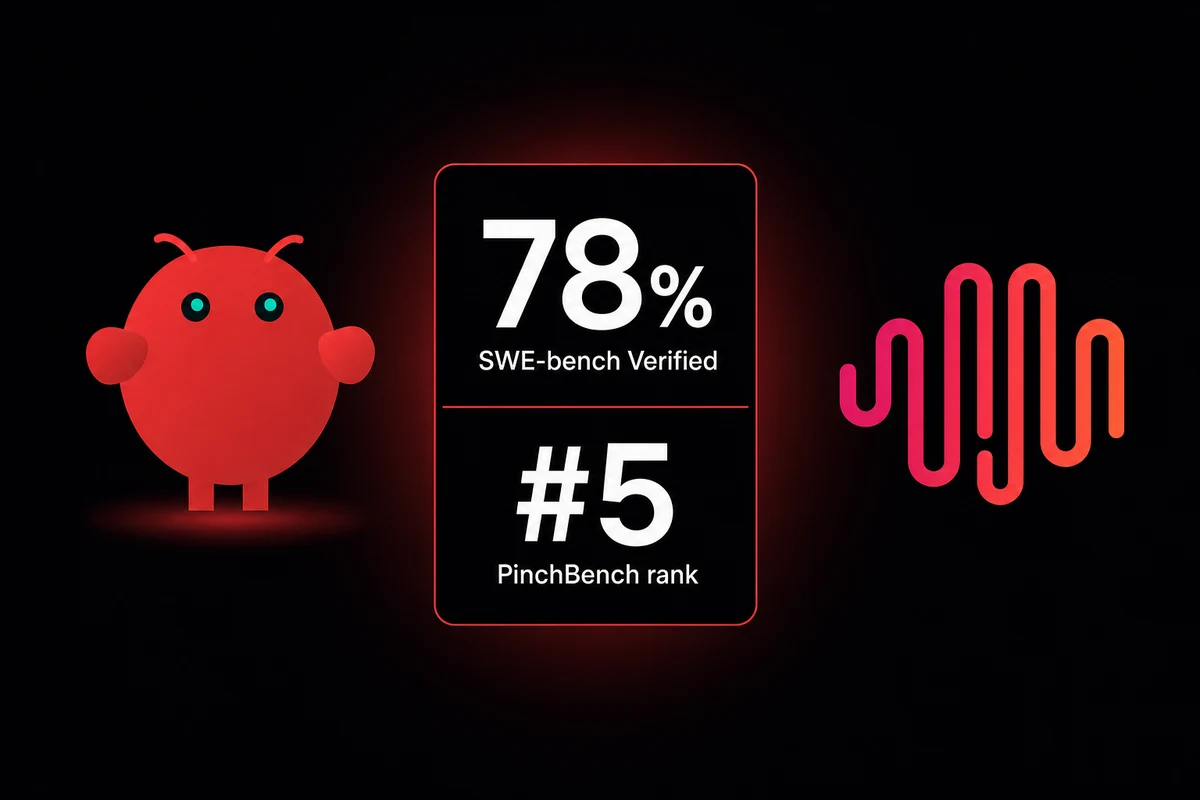

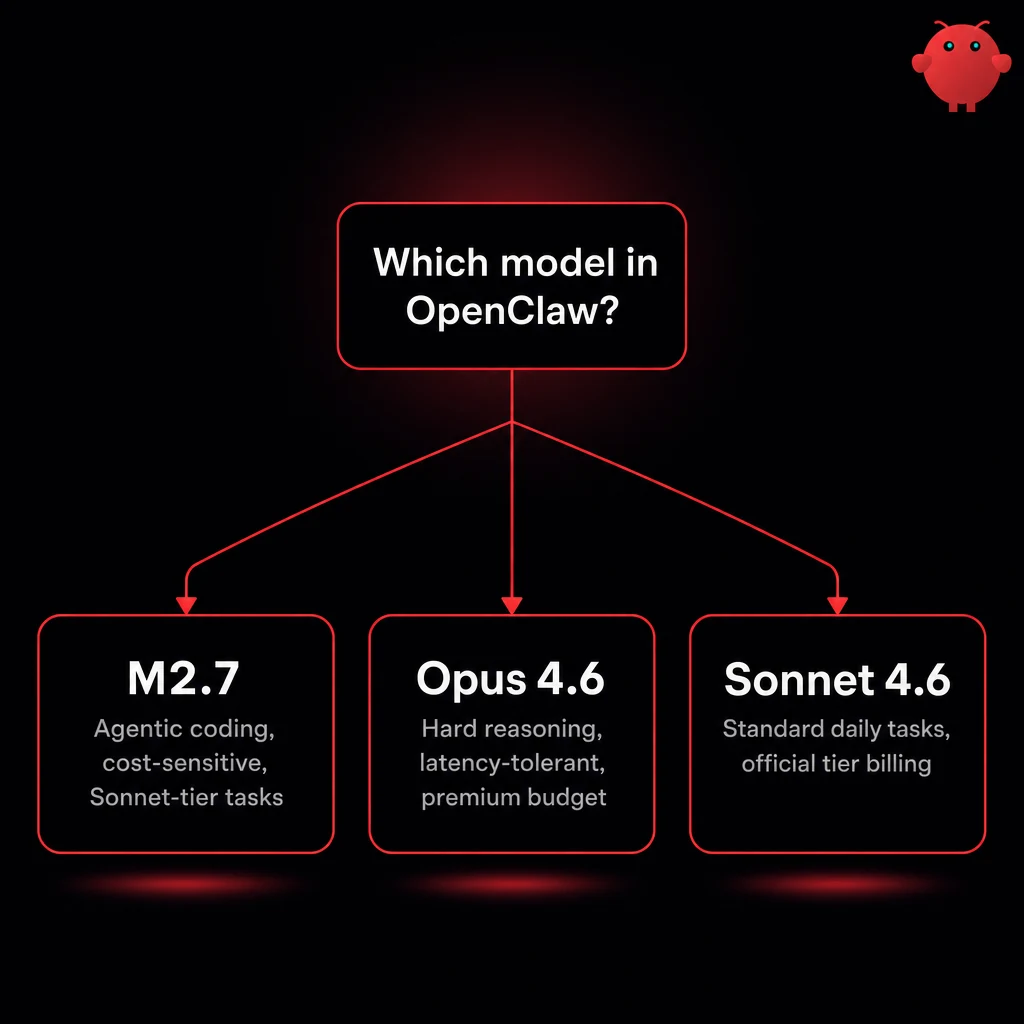

TL;DR: MiniMax M2.7 is a 230B-parameter open-weight model (10B active, 200K context) that runs inside OpenClaw 2026.1.12+ via the minimax provider. At $0.30/M input and $1.20/M output it is 10-20x cheaper than Opus 4.6, scores 78% on SWE-bench Verified against Opus 4.6's 55%, and ranks #5 of 50 on Kilo's PinchBench. It is a real Sonnet replacement. It is not Opus.

The tradeoffs land in three places. License: M2.7 shipped under Modified-MIT, not the standard MIT used in M2.5, so commercial use technically requires written authorization from MiniMax. Verbosity: outside testing clocks ~87M output tokens in test runs, four times the tier median. Hardware: the smallest usable quant is 108GB and pins you to a 128GB Mac or an H100.

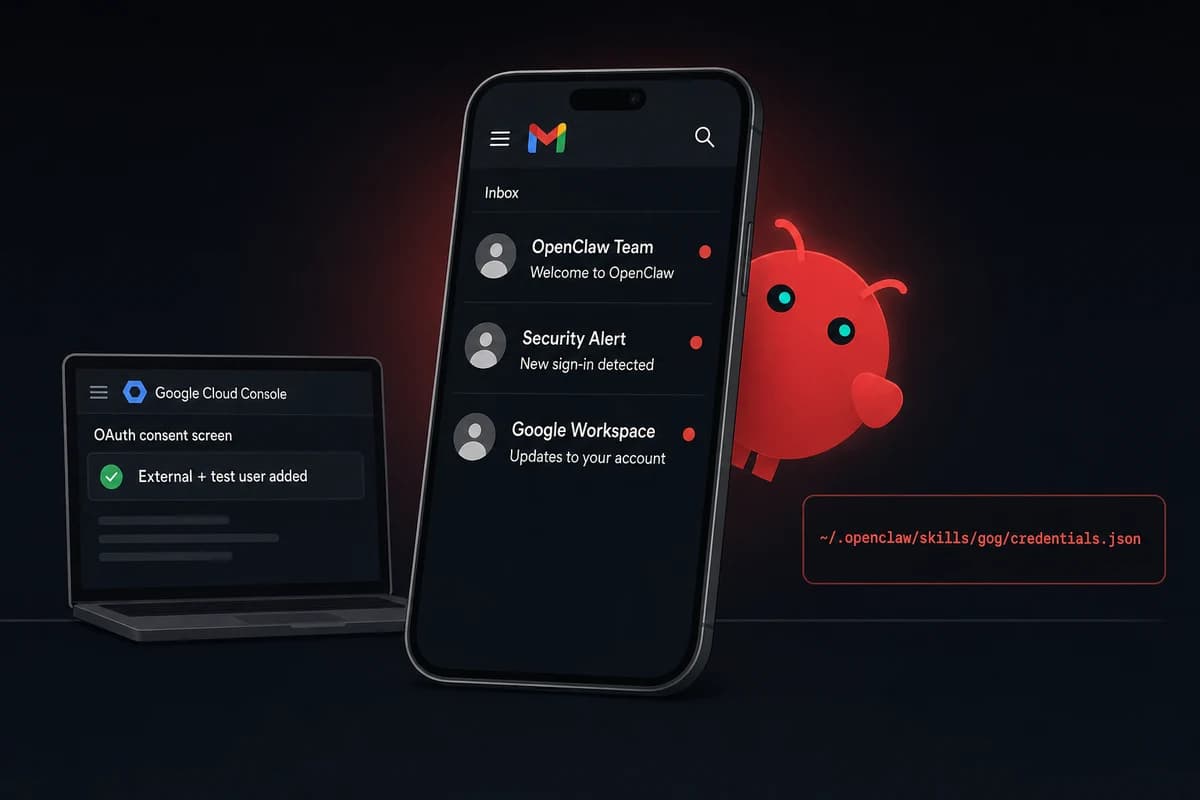

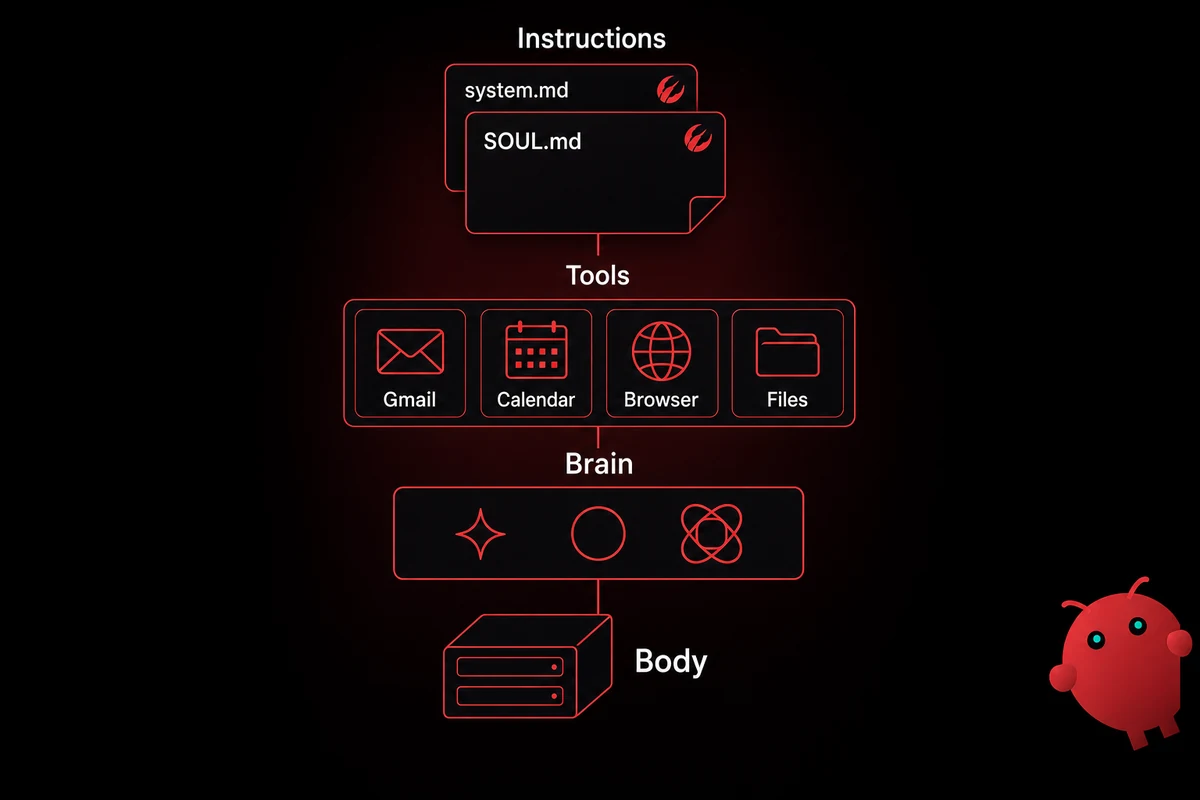

For OpenClaw users the path of least resistance is the API. openclaw configure adds the minimax provider, then OAuth via minimax-portal-auth or a raw API key. M2.7 is in the catalog from 2026.1.12 onward.

This MiniMax M2.7 review is the first non-Anthropic, non-OpenAI option I have considered for daily coding work in OpenClaw.

Benchmarks: what the third-party numbers say

MiniMax M2.7 wins or matches Claude Opus 4.6 on independent coding benchmarks. Kilo's PinchBench lands it at #5 of 50 with 86.2%, a hair behind Opus's 87.4%. Artificial Analysis puts the Intelligence Index at 50, ranked #7 of 81 against a tier median of 28.

| Benchmark | M2.7 | Claude Opus 4.6 | Source |

|---|---|---|---|

| SWE-bench Verified | 78% | 55% | Wavespeed, HuggingFace |

| SWE-Pro | 56.22% | not published | MiniMax (vendor-reported) |

| VIBE-Pro | 55.6% | similar tier | HuggingFace model card |

| PinchBench | 86.2% (#5) | 87.4% (leader) | Kilo Code, blog.kilo.ai |

| GDPval-AA ELO | 1495 | not in open-weight class | Artificial Analysis |

| Intelligence Index | 50 (#7 of 81) | not directly comparable | Artificial Analysis |

Table: Benchmark results for MiniMax M2.7 versus Claude Opus 4.6.

Where the numbers don't tell the whole story

The model is chatty. Artificial Analysis logged ~87M output tokens for its full evaluation, four times the tier median. Output speed sits at 43-47 tok/s on standard tier against a 95.8 tok/s median; time to first token runs 1.93-2.49s versus 1.84s median.

One bright spot: hallucination rate of 34%, against 46% for Sonnet 4.6 and 50% for Gemini 3.1 Pro. M2.7 is verbose but not making things up at peer rates.

My benchmark results in OpenClaw

I ran M2.7 inside OpenClaw 2026.1.13 on a Pro-tier VPS earlier this month, OAuth'd via minimax-portal-auth. Three coding tasks plus a long-context probe.

Task one: a single-file React app with drag-drop priorities, a countdown, and dark mode. M2.7 produced a working scaffold on the first prompt and self-corrected the drag-drop bug on review. Roughly 18,000 input against 72,000 output.

Task two: refactor a 600-line module where I had left half the helpers unannotated on purpose. M2.7 worked it but kept restating large blocks inside its plan. Realized cost: 2.5-3x cheaper than Opus, not 10-20x.

The long-context probe surprised me. At ~120K tokens of accumulated context M2.7 still picked the right helper.

One failure: the first refactor attempt was wrong because I under-specified the contract. M2.7 needs more explicit scaffolding than Sonnet, but the hand-holding cuts the agent loop earlier. Plan for adding scaffolded prompts when you swap in M2.7.

Skipping a weekend of catalog and version setup is the actual upgrade. OpenclawVPS provisions the stack in 47 seconds, EU regions, gateway preinstalled, OpenClaw on a current version. Plans start at $19/month.

Pricing and the "10x cheaper than Opus" math

Standard tier costs $0.30/M input and $1.20/M output. Highspeed doubles both for ~100 tok/s instead of ~45. Cache reads run at $0.06/M, which matters: agentic loops re-read context, and the cache tier cuts those repeats roughly 5x.

| Tier | Input $/M | Output $/M | Throughput |

|---|---|---|---|

| Standard | $0.30 | $1.20 | 43-47 tok/s |

| Highspeed | $0.60 | $2.40 | ~100 tok/s |

| Cache reads | $0.06 | n/a | n/a |

| Cache writes | $0.375 | n/a | n/a |

Table: MiniMax M2.7 pricing tiers and throughput.

Realized cost-per-task lands closer to 2.5-3x cheaper than Opus, not the headline 10-20x. That is the verbosity gotcha, and the limit on how cheap M2.7 actually gets.

The cost story still favors M2.7 where Opus billing compounds. Standard tier 24/7 use lands near $2,000/year against $23K-$39K for an Opus-equivalent workload.

I run standard tier; highspeed only earns its price when latency matters more than per-token cost.

Setting up M2.7 in OpenClaw (the actual config)

OpenClaw 2026.1.12 ships the minimax provider with M2.7 in the catalog. Older versions return "Unknown model" until upgraded. Three steps.

- Upgrade to OpenClaw 2026.1.12+ (or type

MiniMax-M2.7manually as the model name if the catalog has not refreshed). - Run

openclaw configureand pick theminimaxprovider. - Sign in via OAuth (the OpenClaw MiniMax OAuth flow runs through

minimax-portal-auth), a MiniMax Coding Plan token, or a raw API key inopenclaw.json. OAuth is what I run.

Self-hosting is a separate story. Full BF16 weights are 457GB; the UD-IQ4_XS quant runs 108GB on a 128GB Mac. Below Q4 the GGUFs degrade noticeably.

If you'd rather run a MiniMax M2.7 download locally, UD-IQ4_XS at 108GB is the floor. For most users the unified memory floor for OpenClaw is a separate calculation, and the answer is the API.

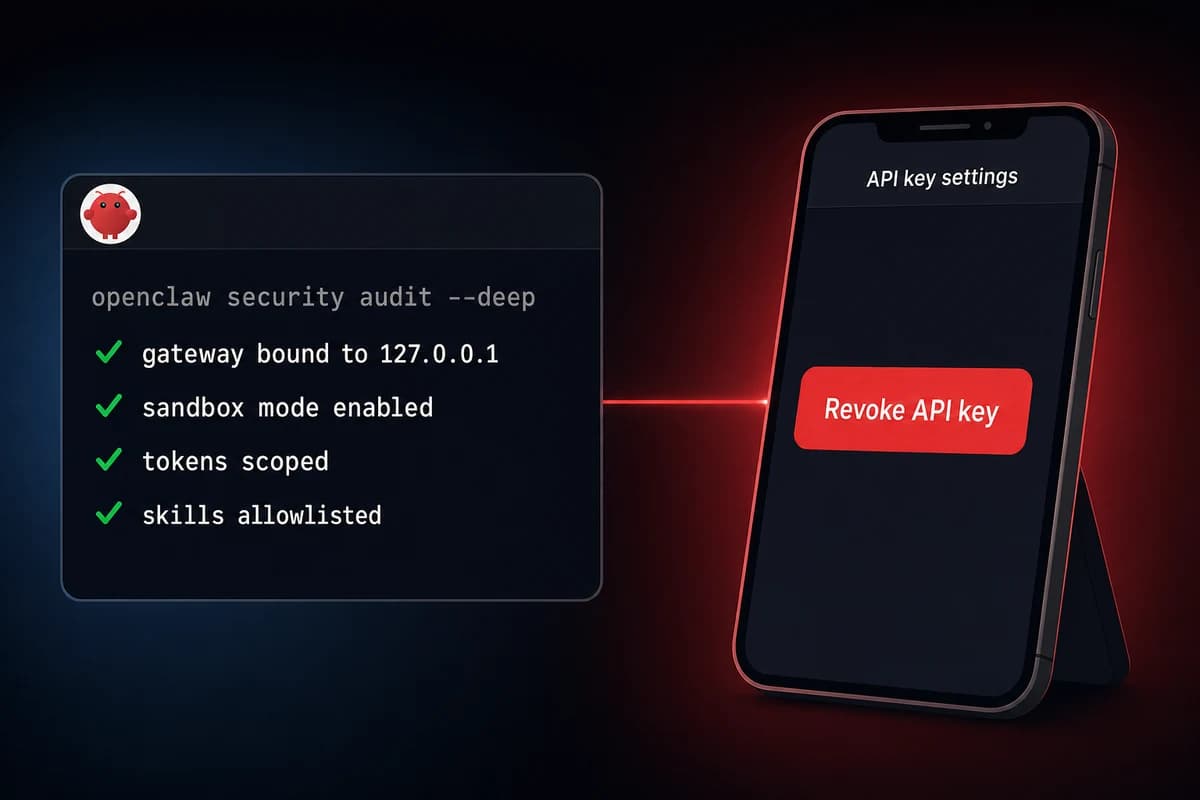

Verdict: when M2.7 in OpenClaw makes sense

Use M2.7 for agentic coding where Claude Opus 4.6 in OpenClaw is too expensive and the verbosity tax is acceptable. My testing landed here: capable as a Sonnet replacement; rough edges on Opus-tier work.

Skip it when you need Opus-tier reasoning, when latency matters and you cannot pay for highspeed, or when you ship commercial product without authorization for the Modified-MIT terms.

MaxClaw locks you to MiniMax models. OpenClawVPS keeps you BYO-model, useful when the answer is to mix GPT for tasks where OpenAI wins with M2.7 where it wins. The wider set of open OpenClaw alternatives is worth knowing if the lock-in math changes.

The setup I run now

Pro-tier VPS, OpenClaw 2026.1.13, OAuth into MiniMax via the portal-auth plugin, M2.7 on standard tier for the everyday coding loop, Opus 4.6 routed in for hard problems. Under $80/month for my volume.