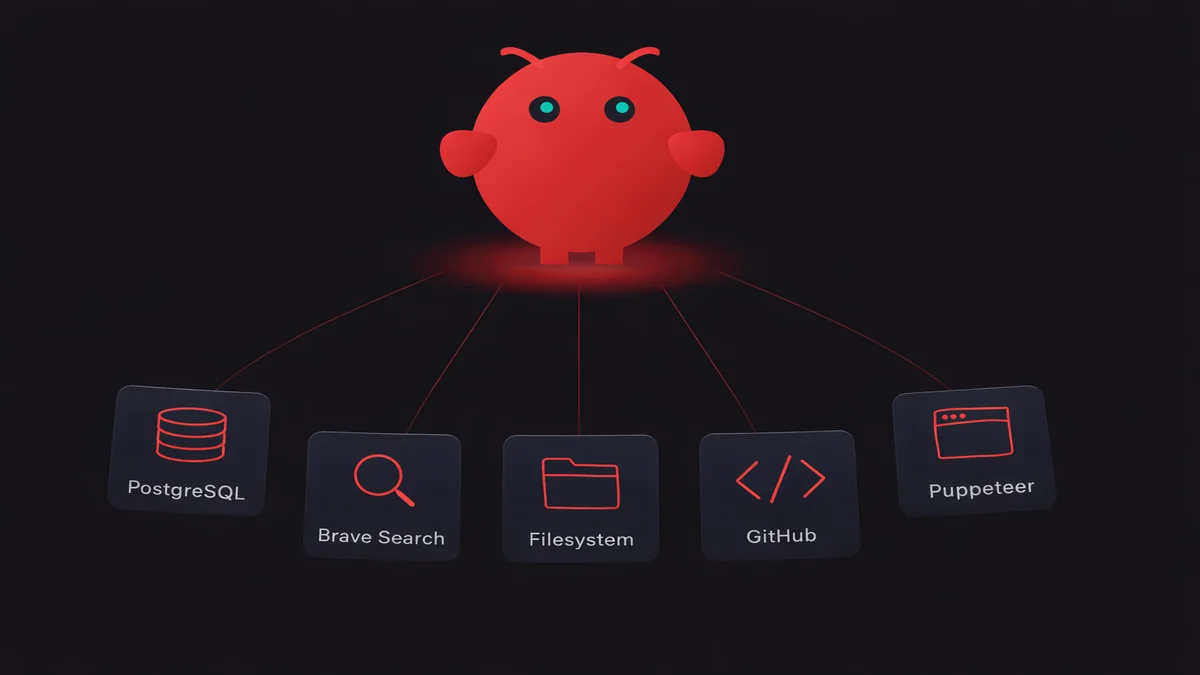

TL;DR: You add MCP servers on OpenClaw through one config file: ~/.openclaw/openclaw.json. Drop a server entry under mcpServers, restart the gateway, done. Your agents get instant access to databases, file systems, search engines, APIs. I run 12 and route different ones to different agents. The 5 worth adding first: filesystem, Brave Search, PostgreSQL, GitHub, and memory.

My OpenClaw agents could chat, follow instructions, and use skills. Useful enough to replace my AI assistant subscriptions. But they couldn't touch anything outside their own context. No file access. No database queries. Smart but stuck in a box.

Once I learned how to add MCP servers on OpenClaw, that changed overnight. MCP (Model Context Protocol) is a standard that lets your agents call external tools through a JSON-RPC interface. USB-C for AI, basically. One protocol, over ten thousand published servers and growing. Claude, ChatGPT, Cursor, VS Code, Gemini — they all speak it now. Your agent doesn't care which one it's talking to. This kind of interoperability is what sets OpenClaw apart—for a comparison with other platforms, check out the full analysis.

I started with one MCP server (filesystem) and now run 12 on a single VPS. One of those servers ate 6 GB of RAM over a weekend before I caught the leak. I'll tell you which one later.

The whole setup lives in one config file. About 60 seconds to add each server.

Add your first MCP server on OpenClaw in 60 seconds

1. Drop a server entry. Add command, args, and transport to ~/.openclaw/openclaw.json under mcpServers.

2. Restart the gateway. Run openclaw gateway restart so the child process spawns with the new config.

3. Verify with mcp list. openclaw mcp list shows the server status, tool count, and transport.

Every MCP server gets a single entry in ~/.openclaw/openclaw.json. That's the same config file where your models and agents live.

Smallest working example, the filesystem server:

```json
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "/home/openclaw/data"],
      "transport": "stdio"
    }
  }
}
```

Three fields. command points to the binary that starts the server, usually npx or node. args is where you pass the package name and any server-specific config (in this case, the directory it's allowed to access). And transport? Almost everything uses stdio right now, so you'll rarely change that one.
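Before restarting, it can help to sanity-check an entry's shape. The sketch below is a hypothetical helper, not part of OpenClaw: the `McpServerEntry` interface and `checkEntry` function are illustrative names, the field list is taken only from the examples in this article, and the `"http"` transport value is an assumption. The real schema may accept more fields.

```typescript
// Shape of one mcpServers entry as shown in this article.
// Assumption: these four fields only; the real schema may allow more.
interface McpServerEntry {
  command: string;
  args?: string[];
  transport?: "stdio" | "http"; // "http" is an assumed value
  env?: Record<string, string>;
}

// Returns a list of human-readable problems; an empty list means the entry looks sane.
function checkEntry(name: string, entry: unknown): string[] {
  if (typeof entry !== "object" || entry === null) {
    return [`${name}: entry must be an object`];
  }
  const problems: string[] = [];
  const e = entry as Record<string, unknown>;
  if (typeof e.command !== "string" || e.command.length === 0) {
    problems.push(`${name}: "command" must be a non-empty string`);
  }
  const args = e.args;
  if (args !== undefined && !(Array.isArray(args) && args.every((a) => typeof a === "string"))) {
    problems.push(`${name}: "args" must be an array of strings`);
  }
  if (e.transport !== undefined && e.transport !== "stdio" && e.transport !== "http") {
    problems.push(`${name}: unknown transport ${JSON.stringify(e.transport)}`);
  }
  return problems;
}

// The filesystem entry from the example above.
const filesystemEntry: McpServerEntry = {
  command: "npx",
  args: ["-y", "@modelcontextprotocol/server-filesystem", "/home/openclaw/data"],
  transport: "stdio",
};
const filesystemProblems = checkEntry("filesystem", filesystemEntry);
```

Running a check like this over every key under mcpServers catches typos before the gateway tries to spawn anything.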

Save the file. Restart the gateway:

```bash
openclaw gateway restart
```

Verify it picked up:

```bash
openclaw mcp list
```

```text
SERVER       STATUS    TOOLS   TRANSPORT
filesystem   running   11      stdio
```

11 tools. Read file, write file, create directory, move, search. Your agents can call all of them immediately.

Under the hood, OpenClaw spawns the MCP server as a child process. Communication happens over stdin/stdout using JSON-RPC 2.0. The server stays alive as long as the gateway runs. No HTTP ports. No network exposure. Local servers don't even need an auth layer.
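To make the stdin/stdout exchange concrete, here is a minimal sketch of how JSON-RPC 2.0 messages are framed on the stdio transport: one JSON object per line. The helper names `frame` and `splitFrames` are hypothetical, and this is not OpenClaw's actual code. A real client also performs an `initialize` handshake before asking for tools.

```typescript
interface JsonRpcRequest {
  jsonrpc: "2.0";
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

// Serialize one request; the stdio transport delimits messages with a newline.
function frame(req: JsonRpcRequest): string {
  return JSON.stringify(req) + "\n";
}

// Split a chunk read from the server's stdout into complete messages,
// returning any trailing partial line so it can be prepended to the next chunk.
function splitFrames(buffer: string): { messages: unknown[]; rest: string } {
  const lines = buffer.split("\n");
  const rest = lines.pop() ?? "";
  const messages = lines
    .filter((line) => line.trim().length > 0)
    .map((line) => JSON.parse(line) as unknown);
  return { messages, rest };
}

// The request a client sends to discover a server's tools.
const listTools: JsonRpcRequest = { jsonrpc: "2.0", id: 1, method: "tools/list" };
const wire = frame(listTools);
```

The `rest` value matters because a pipe read can end mid-message; keeping the partial line avoids parsing half a JSON object.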

The full config I actually use

I didn't plan to add twelve MCP servers on OpenClaw. It crept up over two months. I'd hit a wall where an agent couldn't reach something, add a server, and move on. Repeat twelve times.

```json
{
  "mcpServers": {
    "filesystem": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "/home/openclaw/data"]
    },
    "brave-search": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-brave-search"],
      "env": { "BRAVE_API_KEY": "${BRAVE_API_KEY}" }
    },
    "postgres": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-postgres", "postgresql://localhost:5432/mydb"]
    },
    "github": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-github"],
      "env": { "GITHUB_PERSONAL_ACCESS_TOKEN": "${GITHUB_TOKEN}" }
    },
    "memory": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-memory"]
    },
    "git": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-git"]
    },
    "obsidian": {
      "command": "npx",
      "args": ["-y", "obsidian-mcp-server", "--vault", "/home/openclaw/notes"]
    },
    "puppeteer": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-puppeteer"]
    },
    "sqlite": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-sqlite", "/home/openclaw/data/local.db"]
    },
    "fetch": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-fetch"]
    },
    "slack": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-slack"],
      "env": { "SLACK_BOT_TOKEN": "${SLACK_BOT_TOKEN}" }
    },
    "sequential-thinking": {
      "command": "npx",
      "args": ["-y", "@modelcontextprotocol/server-sequential-thinking"]
    }
  }
}
```

My 12-server setup. Total: roughly 5,200 tokens of tool overhead.

Token overhead matters. Each server injects tool descriptions into your agent's context window. Memory is light at ~200 tokens. GitHub is heavy, 800+ tokens for its 30+ tools. My 12 servers eat roughly 5,200 tokens before anyone types a word. That's real cost on every message, and it compounds when you're running agents with smaller context windows.

Not all of them are equally stable either. Filesystem and memory have never crashed on me. Git and fetch are solid. Puppeteer? That's the memory leak I mentioned. It spawns a headless Chromium instance that sometimes fails to clean up after itself. I was restarting it every 48 hours before I finally wrote a cron job. Worth it for browser automation, but you'll babysit it. (The browser relay avoids this entirely by controlling your real Chrome instead of spawning headless instances.)

Running MCP servers on your own VPS means monitoring uptime, restarting crashed processes, debugging at odd hours. OpenClaw VPS runs your MCP servers with process monitoring and auto-restart built in. Plans start at $19/month.

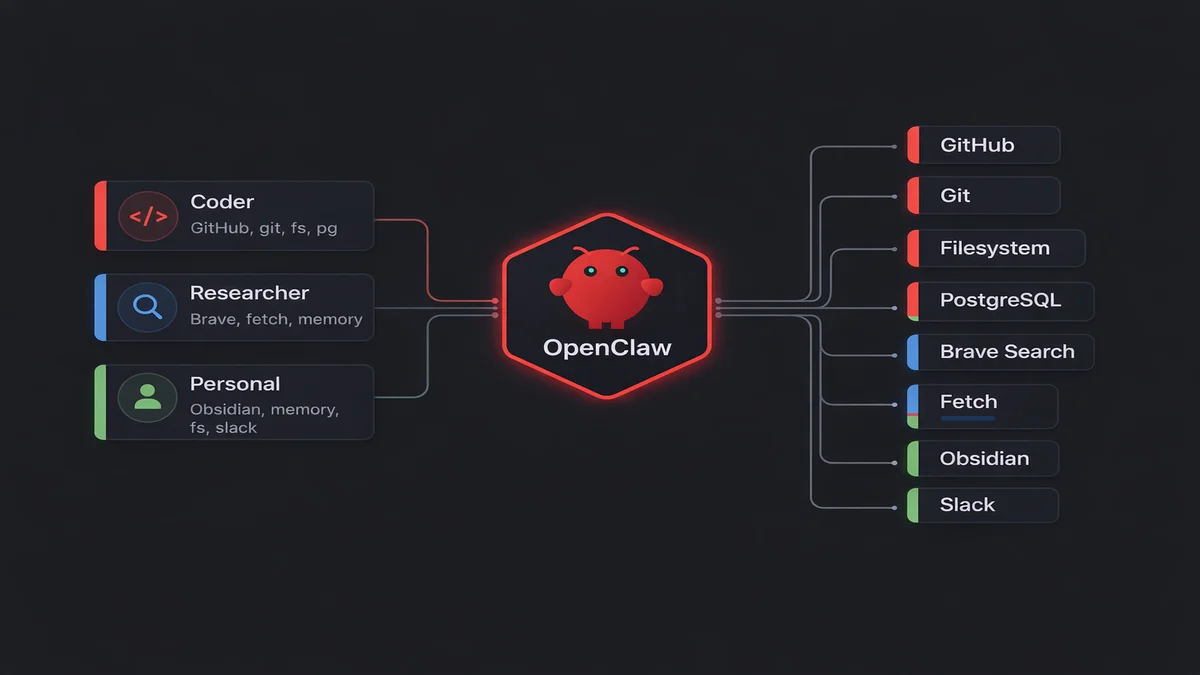

Route different servers to different agents

Most MCP guides skip this part. They shouldn't.

If you're running multiple agents, you probably don't want every agent talking to every server. Why would my coding agent need Obsidian? And my personal agent has no business touching the production database. Principle of least privilege, same as any other system.

Per-agent routing lives in the same openclaw.json, under each agent's config:

```json
{
  "agents": {
    "coder": {
      "mcpServers": ["github", "git", "filesystem", "postgres"]
    },
    "researcher": {
      "mcpServers": ["brave-search", "fetch", "memory", "sequential-thinking"]
    },
    "personal": {
      "mcpServers": ["obsidian", "memory", "filesystem", "slack"]
    }
  }
}
```

When an agent has mcpServers defined, it only sees those servers. No override? It inherits the full global list.

Security is one reason, but the real win is performance. Fewer servers per agent means fewer tool descriptions stuffed into the context window. My researcher agent runs with ~1,800 tokens of tool overhead instead of 5,200. Responses come back faster, cost less, and the model stops getting confused trying to pick between 80+ tools.
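The inheritance rule and the overhead arithmetic can be sketched in a few lines. This is an illustration under stated assumptions, not OpenClaw's implementation: `visibleServers` and `toolOverhead` are hypothetical names, and the token figures are only the five per-server estimates quoted in this article, so servers without a quoted figure count as zero here.

```typescript
type TokenEstimates = Record<string, number>;

// Per-server estimates quoted in this article; other servers are not quoted.
const estimates: TokenEstimates = {
  filesystem: 400,
  "brave-search": 200,
  memory: 200,
  postgres: 450,
  github: 800,
};

// An agent with its own mcpServers list sees only those servers;
// an agent without one inherits the full global list.
function visibleServers(globalServers: string[], agentServers?: string[]): string[] {
  return agentServers ?? globalServers;
}

// Sum the tool-description overhead for the servers an agent can see.
// Servers with no known estimate contribute 0, so treat the result as a lower bound.
function toolOverhead(servers: string[], est: TokenEstimates): number {
  return servers.reduce((sum, name) => sum + (est[name] ?? 0), 0);
}

const globalList = Object.keys(estimates);
const scoped = visibleServers(globalList, ["filesystem", "memory"]);
const scopedOverhead = toolOverhead(scoped, estimates);
const inheritedOverhead = toolOverhead(visibleServers(globalList), estimates);
```

With these figures, an agent scoped to filesystem and memory carries 600 tokens of tool descriptions, while one that inherits all five carries 2,050.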

Environment variables and API keys

Half of my servers need API keys. Brave Search needs BRAVE_API_KEY, GitHub needs a personal access token, Slack wants a bot token. PostgreSQL just needs a connection string.

You can handle this two ways. The fast way is inline in openclaw.json:

```json
{
  "env": { "BRAVE_API_KEY": "BSA-abc123def456" }
}
```

The right way is system environment variables:

```bash
export BRAVE_API_KEY="BSA-abc123def456"
```

Then reference it in the config with ${BRAVE_API_KEY}. OpenClaw resolves the variable at startup.
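What that startup resolution amounts to can be sketched as a simple substitution pass. This is an assumption about the behavior, not OpenClaw's actual code; `resolveEnvRefs` is a hypothetical name, and failing loudly on a missing variable is a design choice made here for illustration.

```typescript
// Replace every ${NAME} reference in a config value with the matching
// environment variable. Throws if a referenced variable is not set, which
// is friendlier than passing an empty key to a server.
function resolveEnvRefs(value: string, env: Record<string, string | undefined>): string {
  return value.replace(/\$\{([A-Za-z_][A-Za-z0-9_]*)\}/g, (_match: string, name: string) => {
    const resolved = env[name];
    if (resolved === undefined) {
      throw new Error(`Environment variable ${name} is not set`);
    }
    return resolved;
  });
}

// Example: the env map is passed in explicitly; a real loader would pass process.env.
const resolvedKey = resolveEnvRefs("${BRAVE_API_KEY}", { BRAVE_API_KEY: "BSA-abc123def456" });
```

Because the substitution happens once when the config is loaded, a value exported later never reaches a server that is already running.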

Go with system env vars. openclaw.json ends up in backups, gets copied during migrations, sometimes gets committed to git by accident. I've done all three. API keys in plaintext config files are a liability.

I keep all my MCP keys in /etc/openclaw/env and source it in the systemd service file. One location, one place to rotate keys. The config file stays clean enough to share.

The mistake everyone makes at least once: setting the env var but forgetting to restart the gateway. OpenClaw reads environment variables at startup. Once. Change a key? Restart.

```bash
openclaw gateway restart
```

The 5 MCP servers I'd add first

- Filesystem: read, write, search files. Zero config, zero API keys. Tokens: ~400. Auth: none.

- Brave Search: free web search, 2,000 queries/month on the free tier. Tokens: ~200. Auth: BRAVE_API_KEY.

- Memory: persistent recall. Agent remembers across sessions. Tokens: ~200. Auth: none.

- PostgreSQL: natural-language SQL against your databases. Tokens: ~450. Auth: connection string.

- GitHub: issues, PRs, file reads, repo search across repos. Tokens: ~800. Auth: GITHUB_TOKEN.

Don't add 12 MCP servers on day one. Start with 5. These are the ones that actually changed how I work.

Filesystem is the obvious first pick. Zero config, zero API keys. Your agent can read and write files, search directories, move things around. I use it for everything from parsing logs to generating nginx configs. npx -y @modelcontextprotocol/server-filesystem /path/to/data and you're done.

Brave Search gives you free web search. 2,000 queries/month on the free tier, which sounds like a lot until your research agent burns through 15-20 in a single session. Still way cheaper than Google Custom Search API. Grab a BRAVE_API_KEY from brave.com/search/api.

Then memory, and honestly this one changed the most about how I work. Without it, your agent forgets everything when the session ends. Gone. Project context, user preferences, ongoing task state — all wiped. Memory fixes that. No API key needed.

I added PostgreSQL because I got tired of writing SQL by hand in pgAdmin. My coding agent handles queries against our dev database now. Natural language in, query results out. Just needs a connection string pointing at your database.

GitHub was the last of the five and maybe the one I'd miss most if I had to drop one. Issues, PRs, file reads, repo search. My coding agent creates issues from chat, opens draft PRs, reads code across repos. I haven't touched the GitHub web UI for routine stuff in weeks. Needs a GITHUB_PERSONAL_ACCESS_TOKEN with repo scope.

All five took under two minutes each. Combined token overhead: roughly 2,050 tokens going by the per-server estimates above (400 + 200 + 200 + 450 + 800). That's manageable even on smaller context windows.

Build and add a custom MCP server

The community has published over 10,000 MCP servers at last count, across registries like mcp.so, Glama, and the official MCP Registry. Docker alone catalogs 270+ servers with container isolation built in. But sometimes you need something that doesn't exist as a package yet. An internal API, a proprietary database, some weird workflow specific to your team.

A bare-bones MCP server in TypeScript is about 30 lines:

```typescript
import { McpServer } from "@modelcontextprotocol/sdk/server/mcp.js";
import { StdioServerTransport } from "@modelcontextprotocol/sdk/server/stdio.js";

const server = new McpServer({
  name: "my-custom-server",
  version: "1.0.0"
});

// Placeholder so the example runs; replace with a call to your internal API.
async function fetchInternalStatus(): Promise<{ ok: boolean }> {
  return { ok: true };
}

// Register a tool that takes no input parameters (empty schema).
server.tool("get_status", "Check the current system status", {}, async () => {
  const status = await fetchInternalStatus(); // your logic here
  return { content: [{ type: "text", text: JSON.stringify(status) }] };
});

// Serve over stdin/stdout so the gateway can spawn it as a child process.
const transport = new StdioServerTransport();
await server.connect(transport);
```

The Python SDK is equally compact if TypeScript isn't your thing. pip install mcp, slap a @mcp.tool() decorator on a function, done. The SDK infers parameter schemas from your type hints and docstrings, so you skip the manual Zod definitions. Same server, either language, MCP clients can't tell the difference.

Build it, point openclaw.json at it, restart the gateway. Your agents can call get_status like any other tool.

Test before you deploy:

```bash
openclaw mcp test my-custom-server
```

This spins up the server, lists available tools, makes a test call, then shuts down. Much faster than chatting with your agent just to see if the tool registered.

For deeper debugging, the MCP Inspector is worth knowing about. Run npx @modelcontextprotocol/inspector node build/index.js and you get a browser UI at localhost:6274 showing all registered tools, their schemas, and the raw JSON-RPC traffic. It also has a CLI mode for automation. I use it whenever a tool's parameter schema doesn't look right to the model.

Troubleshooting MCP servers that won't start

Every error I've hit, compressed into what actually fixed it.

"spawn npx ENOENT"

Node.js isn't in your PATH when OpenClaw spawns the process. Common if you installed Node through nvm, fnm, or asdf. The shell profile that sets the PATH never runs in the subprocess context. Fix: use the full path to npx.

```json
{
  "command": "/home/openclaw/.nvm/versions/node/v22.4.0/bin/npx"
}
```

On Windows, the fix is different. Replace npx with cmd /c npx: set command to cmd and put /c and npx at the front of args, since Windows can't resolve bare npx in a subprocess without a shell wrapper. This one issue has 19,000+ views on Stack Overflow. You're not alone.
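The ENOENT case on Linux and macOS comes down to a PATH lookup that fails in the gateway's environment. Below is a minimal sketch of that lookup, assuming POSIX-style paths with `:` separators; `findOnPath` is a hypothetical helper, and the `exists` check is injected so the logic can be exercised without touching the disk (a real script would pass `fs.existsSync`).

```typescript
// Return the first PATH directory that contains `binary`, or null if none does.
// A null result for "npx" is what surfaces as "spawn npx ENOENT".
function findOnPath(
  binary: string,
  pathVar: string,
  exists: (candidate: string) => boolean
): string | null {
  for (const dir of pathVar.split(":")) {
    if (dir.length === 0) continue; // skip empty PATH segments
    const candidate = dir.endsWith("/") ? dir + binary : `${dir}/${binary}`;
    if (exists(candidate)) return candidate;
  }
  return null;
}

// Simulated filesystem: npx lives only in the nvm directory.
const installed = new Set(["/home/openclaw/.nvm/versions/node/v22.4.0/bin/npx"]);
const onDisk = (candidate: string): boolean => installed.has(candidate);

// An interactive shell's PATH includes the nvm directory; a service's PATH often does not.
const shellHit = findOnPath("npx", "/usr/bin:/home/openclaw/.nvm/versions/node/v22.4.0/bin", onDisk);
const serviceHit = findOnPath("npx", "/usr/local/bin:/usr/bin:/bin", onDisk);
```

The same binary resolves under the shell's PATH and comes back null under the service's PATH, which is why hardcoding the absolute path in command fixes it.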

"MCP server exited with code 1"

Almost always a missing API key or bad arguments. Check the server's output:

```bash
openclaw logs --mcp --server brave-search --tail 50
```

Nine times out of ten it's a missing environment variable. The error usually tells you which one.

Server starts but tools don't appear

Transport mismatch. The server expects HTTP, you configured stdio (or the other way around), and the connection opens but the tool handshake fails silently. Annoying to debug because there's no error. Most community servers use stdio. Check the README.

Memory leak (the Puppeteer story)

Puppeteer spawns Chromium instances for browser automation. After about 48 hours on my box, orphaned browser processes had accumulated to 6 GB of RAM. I only caught it because the VPS started swapping. The fix: set "headless": "new" in the Puppeteer args and add a cron job that restarts the server daily.

```bash
0 4 * * * openclaw mcp restart puppeteer
```

Debugging MCP crashes at 2 AM gets old after the second time. OpenClaw VPS handles process monitoring, auto-restarts, log aggregation. You sleep instead.
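For the Puppeteer leak, a fixed daily restart works, but a memory threshold catches the problem sooner. The sketch below sums resident memory from the text output of `ps -eo rss,comm` (RSS in KiB, then the command name). It is an illustrative helper, not an OpenClaw feature: `rssBytesFor` and `shouldRestart` are hypothetical names, and the 2 GiB limit is an arbitrary assumption.

```typescript
// Sum the resident memory of all processes whose command matches `namePattern`.
// Expects the text output of `ps -eo rss,comm`. Pass a regex without the
// global flag, since global regexes keep state between test() calls.
function rssBytesFor(psOutput: string, namePattern: RegExp): number {
  let totalKiB = 0;
  for (const line of psOutput.split("\n")) {
    const match = /^\s*(\d+)\s+(.+)$/.exec(line);
    if (match === null) continue; // header and blank lines do not match
    const rss = match[1] ?? "0";
    const command = match[2] ?? "";
    if (namePattern.test(command)) totalKiB += Number(rss);
  }
  return totalKiB * 1024;
}

// Decide whether the orphaned browsers have grown past the limit.
function shouldRestart(rssBytes: number, limitBytes: number): boolean {
  return rssBytes > limitBytes;
}

const samplePs = "  RSS COMMAND\n 1024 chrome\n 2048 chrome\n  512 node\n";
const chromeBytes = rssBytesFor(samplePs, /chrome/);
const limitBytes = 2 * 1024 * 1024 * 1024; // assumed threshold: 2 GiB
const restartNeeded = shouldRestart(chromeBytes, limitBytes);
```

A cron job or timer could run a check like this every few minutes and restart the server only when the threshold is crossed.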

What's next for MCP and OpenClaw

MCP moved to the Linux Foundation in December 2025. The Agentic AI Foundation (AAIF) governs it now, with platinum members including AWS, Anthropic, Google, Microsoft, OpenAI, Bloomberg, Block, and Cloudflare. That's not a bet on one vendor anymore. That's the entire industry agreeing on one protocol.

The November 2025 spec release brought a pile of new features. Task-based workflows let servers run long async operations and report progress back to the client. OAuth 2.1 with PKCE replaced the old auth mess, so connecting to remote servers with user accounts is actually standardized now.

An official extensions system also landed. Servers can advertise optional capabilities without breaking older clients. That matters more than it sounds — it means the protocol can grow without fragmenting.

One thing I want to try: code execution mode. Anthropic's engineering team published benchmarks showing that presenting MCP tools as code APIs (instead of raw tool calls) can cut token usage by up to 98.7%. Instead of 150,000 tokens of intermediate tool results, the model writes a short script that calls the tools directly. Cloudflare calls this "Code Mode." Not widely supported yet, but the token savings are hard to ignore.

65% of active OpenClaw skills already wrap MCP servers under the hood (something I covered in the skills article). You can browse them all in the skills marketplace. The line between skills and standalone MCP servers is blurry and getting blurrier. Skills give you behavioral instructions plus tool access. MCP servers give you just the tools. Same config files, same restart command.

The big change I'm watching: Streamable HTTP transport. Right now almost everything runs over stdio, which means the server has to be a local process on your machine. Streamable HTTP lets you connect to remote MCP servers over the network.

Cloudflare already has a full deployment path for this. You can spin up a remote MCP server on Workers with OAuth, container isolation, and a one-click deploy to their edge network. I've been testing one that wraps an internal API, and the latency is fine for everything except high-frequency tool calls. Once more clients add native Streamable HTTP support, running servers locally will start to feel optional.

Security is worth thinking about before that happens. The spec recommends OAuth 2.1 for remote servers, least-privilege API scopes, and rotating tokens every 90 days. Shadow MCP servers — unauthorized servers connected to your agents — are already a documented risk. Keep your openclaw.json locked down, audit what's actually running with openclaw mcp list, and pair it with the broader day-1 OpenClaw security defenses so the gateway itself is not the hole.
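An audit of what's running can be scripted against the `openclaw mcp list` output. The sketch below assumes the four-column layout shown in the sample output earlier in this article (SERVER, STATUS, TOOLS, TRANSPORT); the real output may differ, and `parseMcpList` and `findShadowServers` are hypothetical helpers.

```typescript
interface McpListRow {
  server: string;
  status: string;
  tools: number;
  transport: string;
}

// Parse the whitespace-separated table, skipping the header and blank lines.
function parseMcpList(output: string): McpListRow[] {
  const rows: McpListRow[] = [];
  for (const line of output.split("\n")) {
    const cols = line.trim().split(/\s+/);
    if (cols.length !== 4 || cols[0] === "SERVER") continue;
    const [server, status, tools, transport] = cols as [string, string, string, string];
    const toolCount = Number(tools);
    if (!Number.isInteger(toolCount)) continue; // not a data row
    rows.push({ server, status, tools: toolCount, transport });
  }
  return rows;
}

// Any running server that is not on the approved list is a candidate shadow server.
function findShadowServers(running: string[], approved: string[]): string[] {
  const allow = new Set(approved);
  return running.filter((name) => !allow.has(name));
}

const sampleList =
  "SERVER STATUS TOOLS TRANSPORT\n\nfilesystem running 11 stdio\nmystery running 3 stdio\n";
const listedRows = parseMcpList(sampleList);
const shadows = findShadowServers(listedRows.map((row) => row.server), ["filesystem"]);
```

Keeping the approved list in version control gives you a reviewable record of which servers are supposed to exist.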

For now, stdio gets the job done. But the remote story is coming together fast.

Start adding MCP servers

Add one server to openclaw.json. Restart. Ask your agent to use it. That's the whole process. Every tool you add here works through Telegram and WhatsApp too, not just the dashboard. MCP servers even run on Android via Termux if you're using the proot-distro method.

If you'd rather skip the config files and server management, OpenClaw VPS comes with the top MCP servers pre-configured and managed. But self-hosting works fine. The config above is the exact one running on my box right now.